What is the best way to help an AI understand that the terms ‘airplane’, ‘aircraft’, and ‘jet’ all refer to the same thing? By creating a thesaurus… automatically.

The Horizon Europe Project, MOTIVATE XR, is investigating how Extended Reality (XR) can revolutionise industrial training and remote maintenance support. Existing industrial documentation is often vast and intricate, posing significant challenges for creating effective training resources. As part of this initiative, we are developing an AI-powered tool designed to read, interpret, and convert these complex documents into accessible knowledge graphs.

Knowledge graphs serve as structured frameworks that represent entities and the relationships between them, enabling both humans and AI systems to efficiently navigate, interpret, and utilise complex data. In this context, constructing knowledge graphs from industrial documentation empowers users to leverage AI tools for the streamlined creation of XR training scenarios, effectively transforming dense technical materials into accessible and actionable resources for training and support.

Bridging the gap between dense industrial documentation and accessible training materials requires more than simply converting data: it calls for a genuine understanding of language and meaning. Although knowledge graphs, powered by Natural Language Processing (NLP) and Large Language Models (LLMs), enable humans and machines to decipher complex, domain-specific information, machines still need to look past individual words and grasp their deeper, semantic meaning.

Current AI applications, from chatbots to semantic search, demonstrate impressive capabilities, but real understanding emerges only when these systems grasp the deeper relationships between words and concepts. Without this, their knowledge remains superficial, limiting both the richness and usefulness of the resulting knowledge representations.

That is where a thesaurus becomes essential.

A thesaurus is a resource that organises words according to their meaning. It groups synonyms together and often arranges terms from the most general to the most specific concepts. While writers have traditionally used thesauri to expand their vocabulary, in NLP a thesaurus helps systems connect different words that refer to the same idea. Think of it as a dictionary of synonyms.

For example, humans instantly understand that “airplane”, “aircraft”, and “jet” are interchangeable in many contexts. For a machine, however, these are just different sequences of characters, unless it has access to the semantic relationships found in a thesaurus.

This kind of semantic grouping is critical in AI and domain-specific applications. A thesaurus enables:

- Entity normalisation: mapping the different synonyms of a term to a single, preferred form;

- Better information extraction and classification: making it easier to find and categorise information;

- A clearer understanding of domain-specific language: helping machines understand specialised terms.
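To make entity normalisation concrete, here is a minimal Python sketch, assuming the thesaurus is held in memory as a mapping from each preferred term to its synonyms. The names `THESAURUS`, `build_lookup` and `normalise` are illustrative and not part of our tool:

```python
# Hypothetical in-memory thesaurus: preferred term -> known synonyms.
THESAURUS = {
    "aircraft": ["airplane", "plane", "jet"],
    "engine": ["motor", "powerplant"],
}


def build_lookup(thesaurus: dict[str, list[str]]) -> dict[str, str]:
    """Map every surface form (lower-cased) to its preferred term."""
    lookup = {}
    for preferred, synonyms in thesaurus.items():
        lookup[preferred.lower()] = preferred
        for synonym in synonyms:
            lookup[synonym.lower()] = preferred
    return lookup


def normalise(term: str, lookup: dict[str, str]) -> str:
    """Return the preferred term, or the input unchanged if it is unknown."""
    return lookup.get(term.strip().lower(), term)


lookup = build_lookup(THESAURUS)
print(normalise("Jet", lookup))        # aircraft
print(normalise("propeller", lookup))  # propeller
```

Unknown terms pass through unchanged rather than raising an error, so the lookup can be applied to any text without first checking coverage.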

However, there is a problem: most thesauri are still created manually. Subject matter experts are asked to identify important terms, define synonyms and hierarchies, and organise them in a usable way. This process is time-consuming and repetitive, and even the experts are often unsure how best to approach it: many of them have never been trained to build a thesaurus, so the work can quickly become inefficient and inconsistent.

So, we asked ourselves: can we automate this process?

In this article, we present our approach to automating domain-specific thesaurus generation. Starting from a single subject-specific document, our aim was to:

- Extract relevant terms;

- Group synonyms together, including generated synonyms that do not appear in the text;

- Associate each group with a broader category.

Our goal is to give machines a better grasp of meaning and save humans a lot of time.

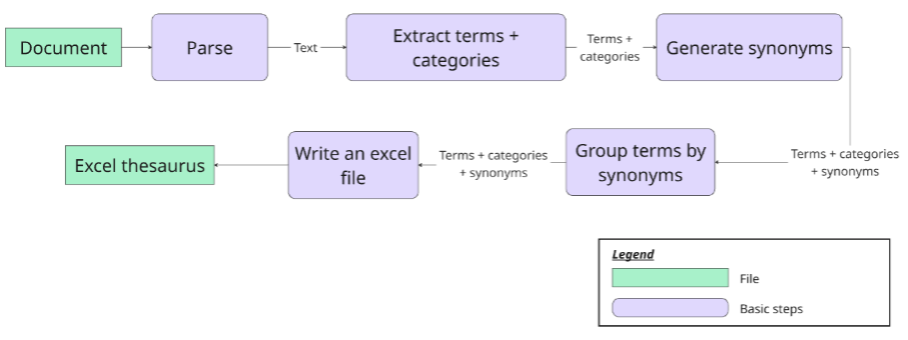

Our Approach: The Basic Pipeline

To automate the creation of the thesaurus from a technical document, we first implemented a basic pipeline. The idea was to transform raw text into a structured Excel thesaurus with minimal human intervention, as illustrated in the diagram above. Here’s how it works, step by step:

1. Document Parsing

First, we take a domain-specific (technical) document and transform it into a clean, structured text that can be reliably analysed.
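What “clean” means depends on the source format. As a hypothetical example, assuming the raw text has already been extracted from the file, a cleaning pass might re-join words hyphenated across line breaks and collapse stray whitespace while keeping paragraph boundaries (`clean_text` is an illustrative name):

```python
import re


def clean_text(raw: str) -> str:
    """Normalise raw extracted text into clean paragraphs (illustrative only)."""
    text = raw.replace("\r\n", "\n").replace("\u00a0", " ")
    # Re-join words hyphenated across a line break, e.g. "mainte-\nnance".
    text = re.sub(r"(\w)-\n(\w)", r"\1\2", text)
    # Split into paragraphs on blank lines, then collapse whitespace inside each.
    paragraphs = re.split(r"\n\s*\n", text)
    cleaned = [" ".join(p.split()) for p in paragraphs]
    return "\n\n".join(p for p in cleaned if p)


raw = "Check the hydraulic\npump before mainte-\nnance.\n\n\n  Replace   worn seals. "
print(clean_text(raw))
```

Note that genuine hyphenated compounds which happen to break at a line end (such as “self-contained”) would lose their hyphen here; a production parser would need a dictionary check or format-specific handling.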

2. Term and Category Extraction

From the parsed text, we identify terms relevant to a particular field, categorising them according to predefined criteria. These categories help to organise the terms and make the resulting thesaurus more useful for subsequent tasks. For instance, when working with medical documents, we might use categories such as “diseases,” “treatments,” and “symptoms.” Ultimately, each extracted term is associated with the category used to identify it.
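One way to implement this step with an LLM is to pass the predefined categories in the prompt and ask for structured output. The sketch below is an assumption rather than our exact prompt: it builds the prompt and parses a JSON reply, discarding any term assigned to a category that was not predefined, which is a cheap guard against false positives. The model call itself is omitted, and the reply is a hand-written stand-in:

```python
import json

# Example categories for a maintenance manual (an assumption, not a fixed list).
CATEGORIES = {
    "component": "physical parts of the equipment",
    "tool": "instruments used to carry out a task",
    "procedure": "named maintenance or inspection operations",
}


def build_extraction_prompt(text: str, categories: dict[str, str]) -> str:
    """Ask an LLM to list domain terms as JSON, one category per term."""
    category_lines = "\n".join(f"- {name}: {desc}" for name, desc in categories.items())
    return (
        "Extract the domain-specific terms from the text below.\n"
        "Assign each term to exactly one of these categories:\n"
        f"{category_lines}\n"
        'Answer only with JSON such as [{"term": "...", "category": "..."}].\n\n'
        f"Text:\n{text}"
    )


def parse_extraction(reply: str, categories: dict[str, str]) -> list[tuple[str, str]]:
    """Keep well-formed (term, category) pairs whose category was predefined."""
    try:
        items = json.loads(reply)
    except json.JSONDecodeError:
        return []
    if not isinstance(items, list):
        return []
    pairs = []
    for item in items:
        if not isinstance(item, dict):
            continue
        term = str(item.get("term", "")).strip()
        category = str(item.get("category", "")).strip().lower()
        if term and category in categories:
            pairs.append((term, category))
    return pairs


# Hand-written stand-in for a model reply; "colour" is not a predefined category.
reply = (
    '[{"term": "hydraulic pump", "category": "Component"},'
    ' {"term": "torque wrench", "category": "tool"},'
    ' {"term": "blue", "category": "colour"}]'
)
print(parse_extraction(reply, CATEGORIES))
# [('hydraulic pump', 'component'), ('torque wrench', 'tool')]
```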

3. Synonym Generation

We then try to enrich the list of terms extracted in the previous step. To do so, we generate potential synonyms for each term. Depending on the domain, this step may rely on pre-trained language models, lexical databases, or custom synonym sets.
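Because the synonym sources vary by domain, it helps to treat each one as an interchangeable function. In this minimal sketch, `custom_source` and `llm_source` are hypothetical stand-ins (the second would wrap a language-model call in practice), and `generate_synonyms` merges their candidates while dropping duplicates and the term itself:

```python
from typing import Callable, Iterable

# A synonym source takes a term and returns candidate synonyms.
SynonymSource = Callable[[str], Iterable[str]]


def generate_synonyms(term: str, sources: list[SynonymSource]) -> list[str]:
    """Query each source in turn, keeping unique candidates in first-seen order."""
    seen = {term.lower()}
    candidates = []
    for source in sources:
        for candidate in source(term):
            key = candidate.strip().lower()
            if key and key not in seen:
                seen.add(key)
                candidates.append(candidate.strip())
    return candidates


# Hypothetical custom synonym set curated for the domain.
CUSTOM = {"hydraulic pump": ["hydraulic power unit", "HPU"]}


def custom_source(term: str) -> list[str]:
    return CUSTOM.get(term.lower(), [])


def llm_source(term: str) -> list[str]:
    # Stand-in for a language-model call; returns a fixed answer here.
    return ["HPU", "fluid pump", "hydraulic pump"]


print(generate_synonyms("hydraulic pump", [custom_source, llm_source]))
# ['hydraulic power unit', 'HPU', 'fluid pump']
```

Listing the curated source first means its spelling wins when two sources propose the same synonym with different capitalisation.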

4. Synonym Grouping

Next, we group terms that have the same or similar meanings. This clustering step reduces redundancy and establishes the core structure of the thesaurus by creating synonym lists.
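If synonym generation yields pairs of related terms, grouping can be treated as finding connected components, so that “aircraft ~ airplane” and “airplane ~ plane” end up in one group. The union-find sketch below is one possible technique, not necessarily the clustering used in our pipeline:

```python
def group_synonyms(pairs: list[tuple[str, str]]) -> list[set[str]]:
    """Cluster terms so that any chain of synonym pairs ends up in one group."""
    parent: dict[str, str] = {}

    def find(term: str) -> str:
        parent.setdefault(term, term)
        while parent[term] != term:
            parent[term] = parent[parent[term]]  # path halving
            term = parent[term]
        return term

    for a, b in pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    groups: dict[str, set[str]] = {}
    for term in parent:
        groups.setdefault(find(term), set()).add(term)
    return list(groups.values())


pairs = [("aircraft", "airplane"), ("airplane", "plane"), ("engine", "motor")]
print(group_synonyms(pairs))
```

Transitive merging is also its weakness: a single wrong pair can chain two unrelated groups together, so noisy pairs are worth filtering before this step.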

5. Excel Export

Finally, all the information (terms with their associated synonyms and categories) is written to an Excel file. This format makes the output easy to read, edit, and use for both technical and non-technical users.
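To keep the example dependency-free, the sketch below writes CSV, which Excel opens directly; producing a native .xlsx file would instead go through a library such as pandas (`DataFrame.to_excel`, which needs an engine such as openpyxl installed). The three-column layout is an assumption:

```python
import csv
import io

COLUMNS = ["Term", "Synonyms", "Category"]


def write_thesaurus(entries, stream) -> None:
    """Write one row per preferred term, with synonyms joined by '; '.

    `entries` is an iterable of (term, synonyms, category) tuples.
    """
    writer = csv.writer(stream)
    writer.writerow(COLUMNS)
    for term, synonyms, category in sorted(entries, key=lambda e: e[0].lower()):
        writer.writerow([term, "; ".join(sorted(synonyms)), category])


entries = [
    ("engine", ["motor"], "component"),
    ("aircraft", ["plane", "airplane"], "vehicle"),
]
buffer = io.StringIO()
write_thesaurus(entries, buffer)
print(buffer.getvalue())
```

When writing to disk rather than to a buffer, open the file with `newline=""`, as the Python `csv` documentation recommends.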

What Makes Our Approach Unique

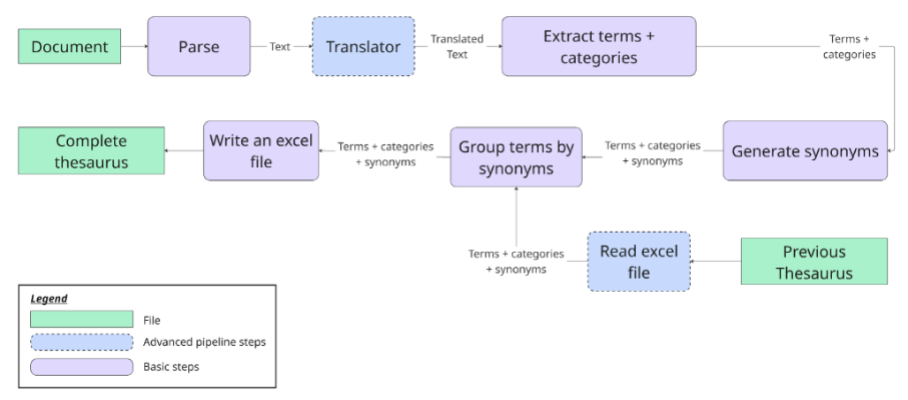

To address the multilingual challenges often encountered in NLP applications, we propose a more advanced version of our pipeline. This includes not only a translation step allowing the system to process non-English documents, but also the ability to merge two thesauri into a single unified resource.

In our case, we use English as the pivot language, because it is the most widely used language in many NLP contexts. However, this choice is flexible: you can select any language as the central language of your thesaurus. Please note that this decision will influence several technical aspects of the pipeline, including the choice of language models, synonym sources, and translation tools.

This advanced pipeline is based on the same core structure, shown in the diagram above, and introduces two major new capabilities:

1. Translator

A translation step is added right after parsing, enabling the system to handle documents written in languages other than the thesaurus target language: such documents are automatically translated into the target language before continuing through the pipeline.
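Architecturally, the translator can be an optional, swappable step. In this sketch the document language is assumed to be known already (for instance from metadata or a language-identification model), and `fake_translate` is a placeholder for whatever translation model or service is chosen for the pivot language:

```python
from typing import Callable

# (text, source language, target language) -> translated text
Translator = Callable[[str, str, str], str]


def translate_if_needed(text: str, source_lang: str, target_lang: str,
                        translate: Translator) -> str:
    """Translate only when the document is not already in the target language."""
    if source_lang.lower() == target_lang.lower():
        return text
    return translate(text, source_lang, target_lang)


def fake_translate(text: str, source: str, target: str) -> str:
    # Placeholder for a real machine-translation model or service.
    return f"[{source}->{target}] {text}"


print(translate_if_needed("Check the pump.", "en", "en", fake_translate))
print(translate_if_needed("Vérifier la pompe.", "fr", "en", fake_translate))
```

Injecting the translator as a function keeps the rest of the pipeline unchanged when the pivot language or the translation model changes.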

2. Thesaurus Merging

If an existing thesaurus is available and we want to integrate newly extracted terms into it, we can use the existing Excel file as input rather than creating a new, separate, and potentially conflicting file. The pipeline reads this file, adds any new terms that are not already present, and updates the thesaurus accordingly. The existing thesaurus must follow the same predefined format.
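A minimal sketch of the merge, assuming both thesauri have been loaded into dictionaries keyed by preferred term. Beyond adding missing terms, it also unions the synonym lists of terms present in both, which is an illustrative choice; existing categories are kept so that curated entries are not overwritten:

```python
def merge_thesauri(existing: dict[str, dict], new: dict[str, dict]) -> dict[str, dict]:
    """Add unseen terms; union synonym lists for terms already present.

    Each thesaurus maps a preferred term to {"synonyms": [...], "category": str}.
    The inputs are left unmodified.
    """
    merged = {
        term: {"synonyms": list(entry["synonyms"]), "category": entry["category"]}
        for term, entry in existing.items()
    }
    for term, entry in new.items():
        if term not in merged:
            merged[term] = {"synonyms": list(entry["synonyms"]),
                            "category": entry["category"]}
            continue
        known = merged[term]["synonyms"]
        for synonym in entry["synonyms"]:
            if synonym not in known:
                known.append(synonym)
    return merged


existing = {"aircraft": {"synonyms": ["plane"], "category": "vehicle"}}
new = {
    "aircraft": {"synonyms": ["jet", "plane"], "category": "machine"},
    "engine": {"synonyms": ["motor"], "category": "component"},
}
print(merge_thesauri(existing, new))
```

A fuller version would also match terms case-insensitively and check whether a “new” term already appears as a synonym of an existing entry.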

Challenges

Although the pipeline is intended to automate thesaurus generation, each stage presents its own set of technical challenges. Every phase, from raw document input to structured output, requires careful handling to ensure both performance and relevance. Below are the main challenges you may encounter at each stage of the automated process.

- Document Parsing – Inconsistent Formatting:

During the document parsing stage, domain-specific documents may be in a variety of formats, such as PDF, HTML or DOCX, each with its own structure and associated issues. This variability poses a challenge when designing the parser. One solution is to implement a modular parsing system that includes different parsers tailored to each file type. Alternatively, a more robust, unified system could be used to handle multiple formats intelligently. Either way, this complexity must be considered from the outset.
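The modular option can be as simple as a registry that maps file extensions to parser functions. Only a plain-text parser is implemented here; the registry and function names are hypothetical:

```python
import tempfile
from pathlib import Path
from typing import Callable

Parser = Callable[[Path], str]
PARSERS: dict[str, Parser] = {}


def register(*extensions: str) -> Callable[[Parser], Parser]:
    """Decorator registering a parser for one or more file extensions."""
    def decorator(func: Parser) -> Parser:
        for ext in extensions:
            PARSERS[ext.lower()] = func
        return func
    return decorator


@register(".txt", ".md")
def parse_plain_text(path: Path) -> str:
    return path.read_text(encoding="utf-8")


# Parsers for ".pdf", ".docx" or ".html" would be registered the same way,
# each wrapping whichever extraction library suits that format.


def parse_document(path: Path) -> str:
    """Dispatch to the parser registered for the file's extension."""
    parser = PARSERS.get(path.suffix.lower())
    if parser is None:
        raise ValueError(f"no parser registered for {path.suffix!r} files")
    return parser(path)


with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "manual.TXT"
    sample.write_text("Torque the bolts to 25 Nm.", encoding="utf-8")
    print(parse_document(sample))  # Torque the bolts to 25 Nm.
```

Unsupported formats fail loudly instead of silently producing empty text, which makes gaps in format coverage visible early.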

- Term and Category Extraction – Ambiguity and Domain Sensitivity:

The quality of the generated thesaurus depends heavily on the relevance and specificity of the provided semantic categories. It is challenging to define clear, well-structured, and non-overlapping categories, and this often requires input from domain experts.

Once the categories have been established, the next challenge is to extract meaningful terms. This step requires models that can understand the vocabulary of the target domain. General-purpose NLP models may miss specialised expressions or introduce irrelevant terms. Therefore, it is essential to choose a language model that is well suited to the domain, or to adapt a general model through effective prompt engineering, in order to improve term relevance and minimise false positives.

- Synonym Generation – Confidentiality and Specificity:

One of the main concerns at this stage is data confidentiality. If sensitive or proprietary documents are involved, the synonym generation process must be run locally, requiring lightweight models that still deliver good performance.

Furthermore, general-purpose language models may overlook subtle distinctions in domain-specific language. To generate useful synonym sets, it is important to select models that are suited to the field and to apply effective prompt engineering techniques that incorporate domain-specific context.

- Translation – Adaptability and Context Management:

The multilingual version of the pipeline introduces translation, which brings its own challenges. Ideally, the system would support multiple language pairs (e.g., European languages to English and vice versa), but the quality of translations can vary across languages and domains.

Additionally, language models are limited by a fixed context window. This means that documents must be divided into smaller chunks without altering the meaning or structure. Effective chunking strategies are crucial for preserving coherence and preventing translation errors that could impact subsequent processing.
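A simple chunking strategy is to pack whole sentences into chunks up to a size limit, so that no sentence is split mid-way. This sketch measures size in characters as a stand-in for tokens, and its regex-based sentence splitter is naive (abbreviations such as “e.g.” would trip it):

```python
import re


def chunk_text(text: str, max_chars: int = 2000) -> list[str]:
    """Split text into chunks of at most max_chars, breaking only between
    sentences so that no sentence is cut in half.

    A single sentence longer than max_chars becomes its own oversized chunk.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


text = "Drain the tank. Remove the filter. Fit a new seal."
print(chunk_text(text, max_chars=35))
# ['Drain the tank. Remove the filter.', 'Fit a new seal.']
```

It also collapses paragraph breaks inside each chunk, so a version that must preserve document structure would split on paragraphs first and fall back to sentences only for oversized paragraphs.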

- Excel Export – Format Consistency and Reusability:

Maintaining a clean, structured, and consistent format for the output Excel file is important for reusability, especially when the thesaurus is updated several times. Poor formatting can lead to confusion and duplication and increase the need for manual corrections, thus defeating the purpose of automation.
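A lightweight safeguard is to validate rows before each export or merge. This sketch, assuming a hypothetical Term/Synonyms/Category layout, reports an unexpected header, malformed rows, empty terms, and case-insensitive duplicates:

```python
REQUIRED_COLUMNS = ("Term", "Synonyms", "Category")


def validate_rows(header: list[str], rows: list[list[str]]) -> list[str]:
    """Return a list of problems; an empty list means the sheet looks consistent."""
    if tuple(header) != REQUIRED_COLUMNS:
        return [f"unexpected header {header!r}"]
    problems = []
    seen: dict[str, int] = {}
    for line_no, row in enumerate(rows, start=2):  # row 1 is the header
        if len(row) != len(REQUIRED_COLUMNS):
            problems.append(
                f"row {line_no}: expected {len(REQUIRED_COLUMNS)} cells, got {len(row)}"
            )
            continue
        term = row[0].strip().lower()
        if not term:
            problems.append(f"row {line_no}: empty term")
        elif term in seen:
            problems.append(f"row {line_no}: duplicate of row {seen[term]} ({row[0]!r})")
        else:
            seen[term] = line_no
    return problems


rows = [
    ["aircraft", "plane; jet", "vehicle"],
    ["Aircraft", "airplane", "vehicle"],
    ["", "motor", "component"],
]
for problem in validate_rows(["Term", "Synonyms", "Category"], rows):
    print(problem)
```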

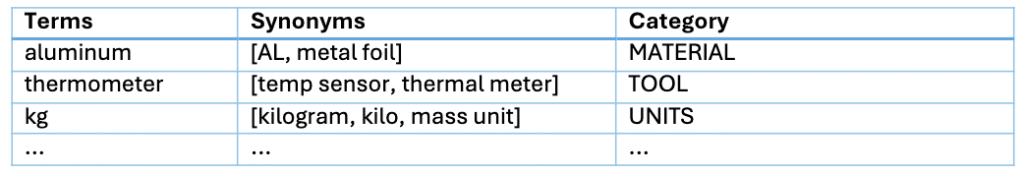

An example of a use case:

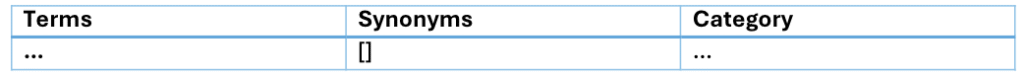

The excerpt above shows part of a thesaurus generated using the default parameter values of the main function in the notebook.

Evaluation

Evaluating the quality of an automatically generated thesaurus is complex, yet essential. Because judgements of quality are subjective and context-dependent, traditional metrics are often inadequate.

In our case, we relied on human evaluation. Domain experts manually reviewed a subset of the terms, their assigned categories, and the generated synonyms, then provided feedback on the accuracy, relevance, and coherence of the groupings. This step was crucial for validating both the extraction and the synonym generation stages.
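If expert verdicts are recorded as simple accept/reject labels (an assumption about the review format), they can be summarised per pipeline stage and category to show where the pipeline most needs attention. The data below is invented for illustration and does not reflect our evaluation results:

```python
from collections import defaultdict


def acceptance_rates(reviews):
    """Share of reviewed items that experts accepted, per (stage, category).

    Each review is a (stage, category, accepted) tuple, e.g. one expert verdict
    on one extracted term or one generated synonym.
    """
    counts = defaultdict(lambda: [0, 0])  # key -> [accepted, reviewed]
    for stage, category, accepted in reviews:
        tally = counts[(stage, category)]
        tally[0] += int(accepted)
        tally[1] += 1
    return {key: acc / total for key, (acc, total) in counts.items()}


# Invented verdicts for illustration only.
reviews = [
    ("extraction", "component", True),
    ("extraction", "component", False),
    ("synonyms", "component", True),
]
print(acceptance_rates(reviews))
# {('extraction', 'component'): 0.5, ('synonyms', 'component'): 1.0}
```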

Although our evaluation was human-driven, it is worth noting that the LLM-as-a-judge approach, in which a large language model is used to automatically assess outputs, is emerging as a promising method in NLP. Prompting an LLM to evaluate the quality of synonyms or suggest better alternatives enables us to scale evaluations and obtain consistent, reproducible feedback. While it cannot replace expert validation, particularly in specialised domains, it offers a robust complementary strategy for future iterations.

Conclusion

By generating a thesaurus automatically from domain-specific documents, we can unlock powerful new possibilities across the NLP pipeline. This structured set of terms and their synonyms can be reused to automate and improve downstream tasks, such as knowledge graph construction, semantic search, or document classification. Standardising terminology and linking related expressions makes it much easier for machines to “understand” the language of a specific domain.

As well as being a resource, the thesaurus can also be used as a tool for evaluation. For example, it can be used to evaluate the performance of entity extraction models: if the extracted terms do not align with the thesaurus, this may suggest problems with the model or gaps in domain coverage.
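A minimal sketch of such a check, assuming a lookup that maps every known surface form (preferred terms and synonyms, lower-cased) to its preferred term. Misses then point either to extraction errors or to gaps in the thesaurus:

```python
def coverage_report(extracted: list[str], lookup: dict[str, str]) -> tuple[float, list[str]]:
    """Return the share of extracted entities known to the thesaurus, plus misses.

    `lookup` maps every lower-cased surface form (preferred terms and synonyms)
    to its preferred term.
    """
    if not extracted:
        return 1.0, []
    misses = [e for e in extracted if e.strip().lower() not in lookup]
    return 1 - len(misses) / len(extracted), misses


lookup = {"aircraft": "aircraft", "plane": "aircraft", "engine": "engine"}
score, misses = coverage_report(["Plane", "engine", "rudder", "blue"], lookup)
print(score, misses)  # 0.5 ['rudder', 'blue']
```

Here “rudder” would suggest a coverage gap in the thesaurus, whereas “blue” looks more like an extraction error; telling the two apart still needs a domain expert.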

In short, creating a thesaurus is a crucial stepping stone, not the final step. It enhances both the automation and evaluation capabilities of numerous NLP applications, rendering them more robust, more interpretable, and more aligned with human understanding.

By standardising domain-specific terminology, the thesaurus strengthens graph-based retrieval-augmented generation (Graph RAG) by enabling clearer knowledge graph construction and more precise semantic search. For industrial training documentation, it ensures consistent language across materials, making information easier for both humans and AI to interpret and utilise. Ultimately, this unified resource accelerates automation and supports more robust, domain-aligned workflows.

Authors

Sopra Steria

Alice Petit has been an AI engineer at Sopra Steria Group since 2021, specialising in language processing and the explainability of AI models. Her main role within the project is developing AI that accelerates content creation within VR/AR authoring tools.

Sopra Steria

Lucas Colomines holds a Master’s degree in Computer Vision and Machine Learning. His work spans a diverse range of optimisation challenges, including quantum computing, RAG systems, mathematical modelling, and computer vision. He has contributed to projects involving advanced algorithmic design and applied research, with a focus on bridging theoretical models and real-world applications.